Tech

The secret police: Local and federal cops built a shadowy surveillance machine in Minnesota after George Floyd’s murder

Published

3 years ago

By

Terry Power

The night of April 11, activists gathered outside the Brooklyn Center Police Department in defiance of a curfew. The police station was quickly fortified by fencing and barriers. Police made liberal use of tear gas over several nights of protests; it wafted into apartment buildings surrounding the police station and injured several residents. The lights on the station were turned off in an effort to make it harder for protesters to see and target officers. News reports estimated that 100 protesters encountered hundreds of police officers, as well as approximately 100 National Guard members. Around 30 arrests were made.

The next day, school was canceled. In response to the chaos of the previous night, the Brooklyn Center City Council hurried to pass a resolution banning aggressive police tactics such as rubber bullets, tear gas, and “kettling,” in which groups of protesters are blocked into a confined space. A curfew was also put into effect from 7 p.m. to 6 a.m. The council’s resolution went into effect by nightfall on the 12th, but police continued using the banned tactics and munitions. That night, approximately 20 businesses in the area were broken into.

As part of the operation, Minneapolis Police also summoned helicopters from Customs and Border Protection (part of the US Department of Homeland Security). The presence of circling aircraft would become a hallmark of Operation Safety Net. During the peak of the protests, the helicopters came and went from a difficult-to-access industrial area near the Mississippi River between Brooklyn Center and Minneapolis, flying at high altitudes to avoid detection.

On at least two nights during the height of the protests, which spanned nearly 10 days, law enforcement briefly detained and took detailed photographs of credentialed members of the press who were covering the events.

The ACLU, along with pro bono lawyers from private law firms Fredrikson & Byron P.A. and Apollo Law, recently settled a class action lawsuit against the city over its treatment of journalists during the protests. The settlement requires the city to pay over $800,000 to injured journalists, and a federal judge ordered an injunction lasting six years that prohibits Minnesota policing agencies from attacking and arresting journalists, or ordering them to disperse from the scene of a protest.

On April 15, more than 75 community organizations, including the ACLU, issued a joint statement calling for the state to end OSN. “The state’s use of force against Minnesotans exercising their First Amendment rights in Brooklyn Center and militarization of our cities in response to police violence is wrong, traumatizing, and adding to the public health crisis of COVID, police brutality, and systemic racism,” the statement read. It called out the “continued use of militaristic tools of oppression to intimidate and halt peaceful, if justifiably angry, protest.” The NAACP also called for a stop to Operation Safety Net via Twitter.

The Minneapolis Legislative Delegation, a group of state legislators, sent a letter to Minnesota governor Tim Walz condemning OSN and asking for a “reevaluation of tactics.” Congresswoman Ilhan Omar also criticized OSN, likening it to “a military occupation” and calling on Walz and Minneapolis mayor Jacob Frey to “stop terrorizing people who are protesting the brutality of state sanctioned violence.” On April 22, the US Department of Justice announced an investigation into the Minneapolis Police Department, citing a possible pattern of excessive use of force including in response to protests. The investigation is ongoing.

All told, the operation cost tens of millions of public dollars, paid by the participating agencies. The Minnesota State Patrol alone paid $1,048,946.57, according to an email sent to MIT Technology Review, and the Minnesota National Guard estimated that its role cost at least $25 million.

Despite the public costs, the detentions, and the criticism, however, most details of OSN’s attempts to surveil the public remained secret.

Surveillance tools

As part of our investigation, MIT Technology Review obtained a watch list used by the agencies in the operation that includes photos and personal information identifying journalists and other people “doing nothing more than exercising their constitutional rights,” according to Lieta Walker, a lawyer representing journalists arrested in the protests who has examined the list. It was compiled by the Criminal Intelligence Division of the Hennepin County Sheriff’s Office—one of the groups participating in OSN—and included people arrested by the Minnesota State Patrol, another participant.

The Minnesota State Patrol and Minneapolis Police Department both told MIT Technology Review in an email that they were not aware of the document, and the Hennepin County Sheriff’s Office did not reply to multiple requests for comment.

OSN also used a real-time data-sharing tool called Intrepid Response, which is sold on a subscription basis by AT&T. It’s much like a Slack for SWAT: at the press of a button, images, video (including footage captured by drones), geolocations of team members and targets, and other data can be instantly shared between field teams and command center staff. Credentialed members of the press who were covering the unrest in Brooklyn Center were temporarily detained and photographed, and those photos were uploaded into the Intrepid Response system.

Although the State Patrol denied numerous records requests from MIT Technology Review regarding the detention and photographing of journalists, photojournalist J.D. Duggan was able to obtain his personal file—a total of three pages of material. The information Duggan obtained illuminates the extent of law enforcement’s efforts to track individuals in real time: the pages include photos of his face, body, and press badge, surrounded by time stamps and maps showing the location of his brief detention.

Previous reporting has shown that policing agencies participating in OSN also had access to many other technological surveillance tools, including a face recognition system made by the controversial firm Clearview AI, cell site simulators for cell-phone surveillance, license plate readers, and drones. Extensive social media intelligence gathering was a core part of OSN as well.

Drones were also used during the earlier protests following Floyd’s murder, when a Predator operated by US Customs and Border Protection (a technology typically used to monitor battlefields in Afghanistan, Iraq, and elsewhere) was spotted flying over the city. Interestingly, the drone flight and two National Guard spy plane flights revealed that the aerial surveillance technology the police already owned was actually superior. In a report, the inspector general of the US Air Force said, “Minnesota State Police transmitted their helicopter images … and noted the police imagery was much better quality” than that provided by the RC-26 spy planes the military operated over Minneapolis in the first week of June 2020. Police also used a warrant to obtain Google geolocation information of people involved in the protests in May 2020.

The intelligence teams

In total, OSN would require officers from nine agencies in Minnesota, 120 out-of-state supporting officers, and at least 3,000 National Guard soldiers. The surveillance tools were managed by several different intelligence groups that collaborated throughout the operation. The structure of these intelligence teams, the personnel, and the extent of the involvement of federal agencies have not previously been reported.

In the same area where helicopters from federal agencies were surreptitiously taking off and landing is a facility known as the Strategic Information Center. The SIC, as it’s called, was a central planning site for Operation Safety Net and also functions as an intelligence analysis hub, known as a “fusion center,” for the Minneapolis Police Department. The facility contains the latest technology and is plugged into citywide camera feeds and data-sharing systems. The SIC featured prominently in documents reviewed for this investigation and was used routinely by OSN leaders to coordinate field operations and intelligence work.

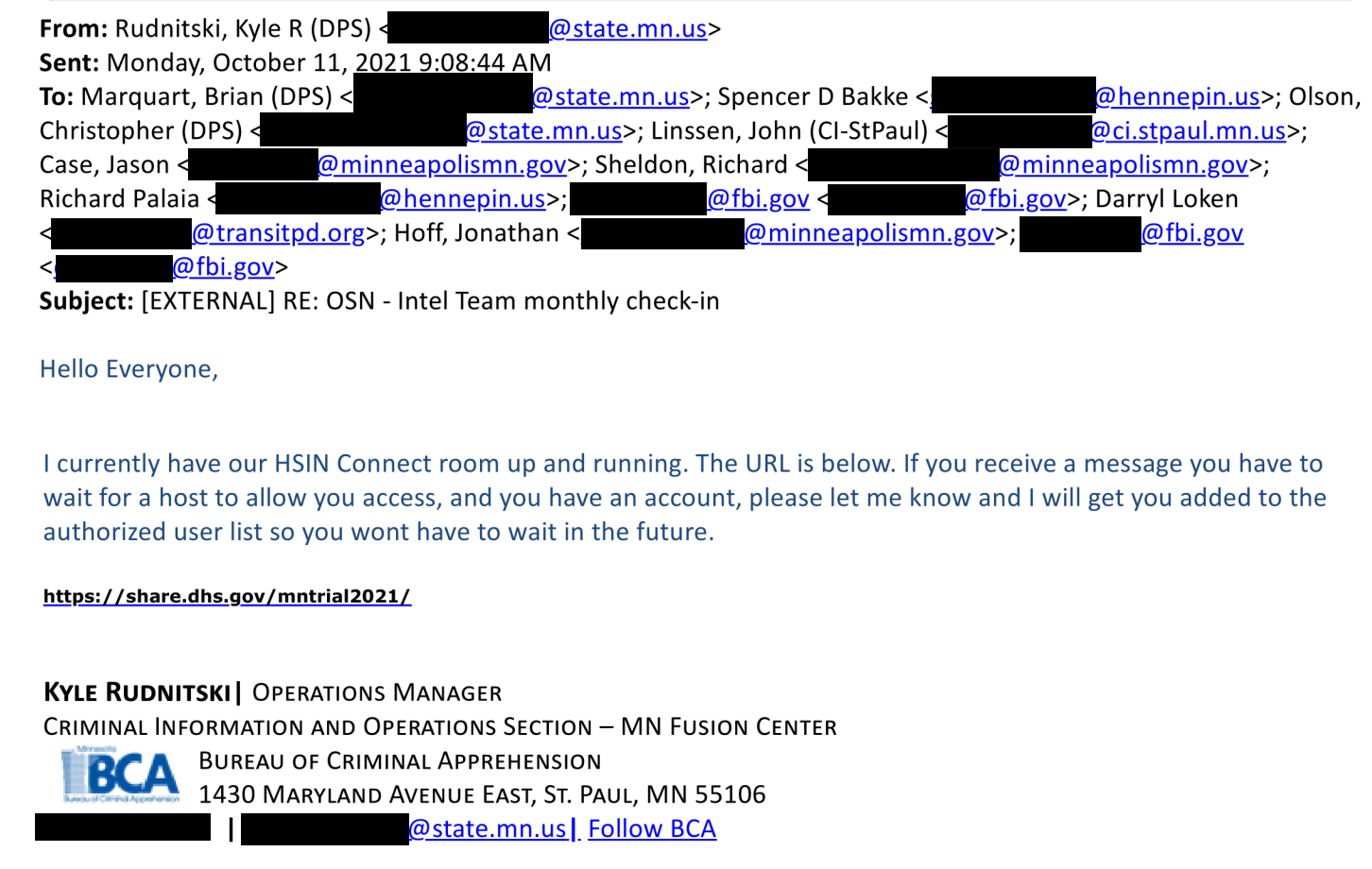

Emails obtained through public records requests shed light on an “intel team” within Operation Safety Net. It was made up of at least 12 people from agencies including the Minneapolis and St. Paul police, the Hennepin County sheriff, the Minnesota Department of Public Safety and Metro Transit, and the FBI. The intel team used the Homeland Security Information Network (HSIN), run by the US Department of Homeland Security, to share information and appears to have met regularly through at least October 2021. The network offers access to facial recognition technology, though Bruce Gordon, director of communications at the Minnesota Department of Public Safety, told MIT Technology Review in an email that the state Bureau of Criminal Apprehension’s (BCA) fusion center “does not own or use facial recognition technology.”

Our investigation shows clear and substantial involvement of federal agencies at the highest level of Operation Safety Net, with four FBI agents included in the executive team of the operation in addition to the two on the intel team. Federal agents had also been deployed to several cities, including New York and Seattle, during the 2020 Black Lives Matter protests. In Portland, Oregon, the FBI launched a months-long surveillance operation that involved covertly filming activists. On June 2, 2020, FBI deputy director David Bowdich released a memo encouraging aggressive surveillance of the activists, calling the protest movement “a national crisis.” The Department of Homeland Security also deployed around 200 personnel to cities around the US, with most reporting to Portland.

Kyle Rudnitski, listed as an operations manager at the BCA fusion center in his email signature, acted as the administrator of HSIN for the intel team and the host for planning meetings. Rudnitski appeared to also be responsible for managing account permissions for the team.

The BCA’s fusion center is the primary data-sharing center for Minnesota, but there are several operated by other law enforcement entities throughout the state. The facility is staffed by criminal intelligence analysts and others who run a constellation of intelligence-gathering tools and reporting networks.

Fusion centers are intelligence-sharing and analysis hubs, spread throughout the country, that bring together intelligence from local, state, federal, and other sources. These centers were widely set up in the wake of the 9/11 terror attacks to consolidate intelligence and more rapidly assess threats to national security. According to the Department of Homeland Security’s website, these centers are intended to “increase collaboration” between agencies through data sharing. The centers are staffed by multiple police agencies, federal law enforcement and National Guard personnel, and sometimes contractors. The proliferation of these centers has come under intense scrutiny for raising the risk of abusive policing practices.

“Instead of looking for terrorist threats, fusion centers were monitoring lawful political and religious activity. The Virginia Fusion Center described a Muslim get-out-the-vote campaign as ‘subversive,’” reads a 2012 report from the Brennan Center, a law and policy think tank. “In 2009, the North Central Texas Fusion Center identified lobbying by Muslim groups as a possible threat. The DHS dismissed these as isolated episodes, but a two-year Senate investigation found that such tactics were hardly rare. It concluded that fusion centers routinely produce ‘irrelevant, useless, or inappropriate’ intelligence that endangers civil liberties.”

“Anonymity is a shield”

In February 2022, policing in Minnesota again became a focus for protests after Minneapolis police shot and killed Amir Locke, a 22-year-old Black man who appeared to be sleeping on a couch when officers executed a no-knock warrant as part of a homicide investigation. Locke was not a suspect in the homicide, contrary to what initial police press releases about the incident claimed.

Despite public statements that OSN was in “phase four” as of April 22, 2021—the final phase, in which the operation would “demobilize,” according to statements given during the initial press conference—it appears that the program was still ongoing when Locke was killed. Documents obtained by MIT Technology Review show that regular planning meetings, secured chat rooms, and the sharing and updating of operation documents remained in effect through at least October.

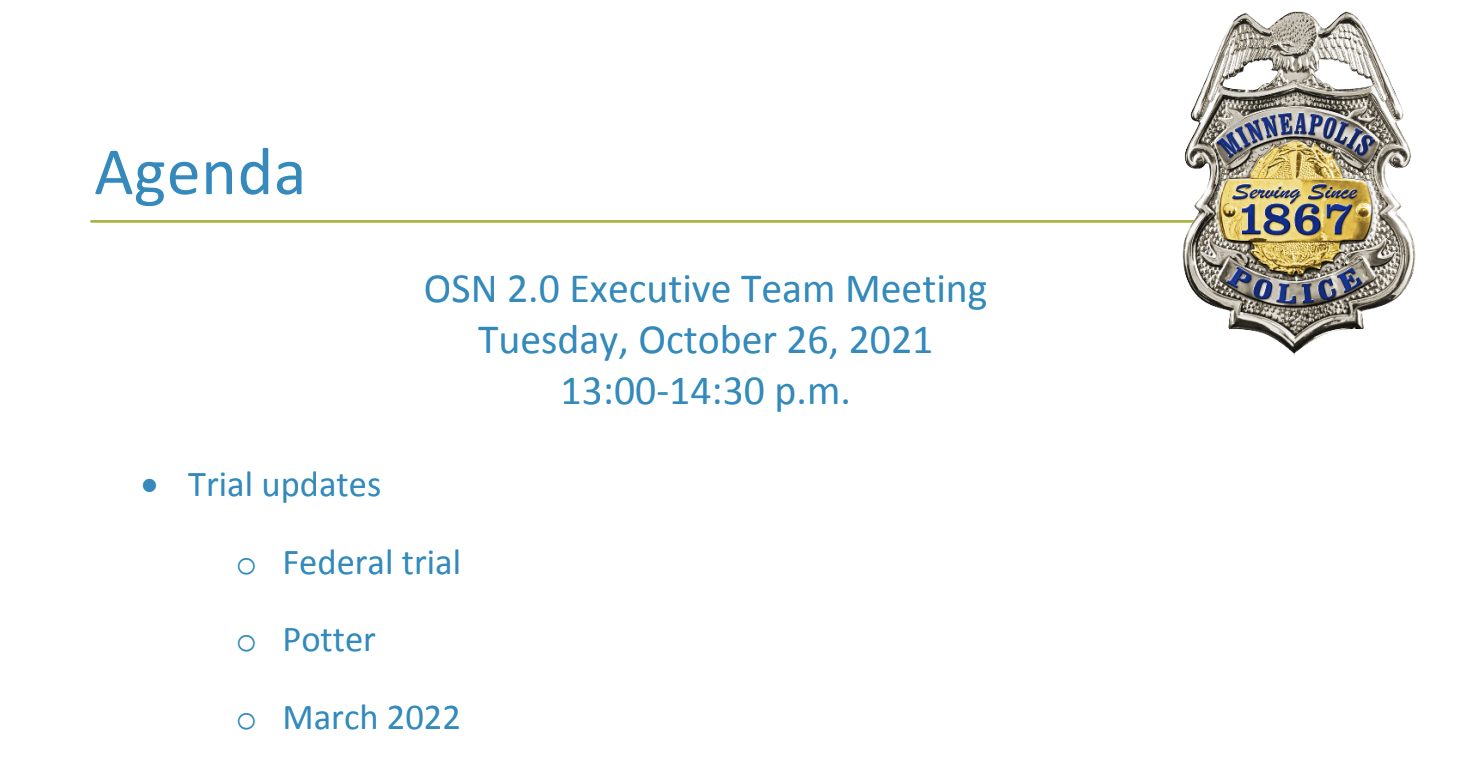

The emails also contained details about a meeting on October 26, 2021, for the “OSN 2.0 Executive Team” that included among its agenda items “Potter Trial,” referencing the trial of Kim Potter in December, and “March 2022.” The FBI was included in the OSN 2.0 Executive Team emails.

“There never has been, nor is there now, an ‘OSN 2.0,’” Gordon told MIT Technology Review in an email. “Any reference was an informal way of notifying state, local and federal partners that planning would take place … the Minnesota Fusion Center continues to share threat assessment information with law enforcement agencies in keeping with its mission. This was not unique to the time during which OSN existed.” Gordon also disputed the characterization that OSN itself amounted to large-scale surveillance activity.

On Thursday, February 24, the three other officers on the scene when Chauvin murdered George Floyd were found guilty of federal crimes for violating Floyd’s civil rights, though they still await a state trial.

The events in Minnesota have ushered in a new era of protest policing. Protests that were intended to call attention to the injustices committed by police effectively served as an opportunity for those police forces to consolidate power, bolster their inventories, solidify relationships with federal forces, and update their technology and training to achieve a far more powerful, interconnected surveillance apparatus. Entirely new titles and positions were created within the Minneapolis Police Department and the aviation section of the Minnesota State Patrol that leverage new surveillance technologies and methods, which will be explained in detail in this investigative series.

Anonymity is an important though muddy tenet of free speech. In a landmark 1995 Supreme Court case, McIntyre v. Ohio, the court declared that “anonymity is a shield from the tyranny of the majority.” Clare Garvie, a senior associate with the Georgetown Law Center on Privacy & Technology, says the case established that “to hold an unpopular speech and to be free to express that necessarily requires a degree of anonymity.” Though police do have the right to do things like take photographs at protests, Garvie says, “law enforcement does not have the right to walk through a protest and demand that everybody show their ID.”

But a wild proliferation of technologies and tools has recently made such anonymous free speech nearly impossible in the United States. This series will provide a rare glimpse behind the curtain during a transformative time for policing and public demonstration in the US.

My senior spring in high school, I decided to defer my MIT enrollment by a year. I had always planned to take a gap year, but after receiving the silver tube in the mail and seeing all my college-bound friends plan out their classes and dorm decor, I got cold feet. Every time I mentioned my plans, I was met with questions like “But what about school?” and “MIT is cool with this?”

Yeah. MIT totally is. Postponing your MIT start date is as simple as clicking a checkbox.

Now, having finished my first year of classes, I’m really grateful that I stuck with my decision to delay MIT, as I realized that having a full year of unstructured time is a gift. I could let my creative juices run. Pick up hobbies for fun. Do cool things like work at an AI startup and teach myself how to create latte art. My favorite part of the year, however, was backpacking across Europe. I traveled through Austria, Slovakia, Russia, Spain, France, the UK, Greece, Italy, Germany, Poland, Romania, and Hungary.

Moreover, despite my fear that I’d be losing a valuable year, traveling turned out to be the most productive thing I could have done with my time. I got to explore different cultures, meet new people from all over the world, and gain unique perspectives that I couldn’t have gotten otherwise. My travels throughout Europe allowed me to leave my comfort zone and expand my understanding of the greater human experience.

“In Iceland there’s less focus on hustle culture, and this relaxed approach to work-life balance ends up fostering creativity. This was a wild revelation to a bunch of MIT students.”

When I became a full-time student last fall, I realized that StartLabs, the premier undergraduate entrepreneurship club on campus, gives MIT undergrads a similar opportunity to expand their horizons and experience new things. I immediately signed up. At StartLabs, we host fireside chats and ideathons throughout the year. But our flagship event is our annual TechTrek over spring break. In previous years, StartLabs has gone on TechTrek trips to Germany, Switzerland, and Israel. On these fully funded trips, StartLabs members have visited and collaborated with industry leaders, incubators, startups, and academic institutions. They take these treks both to connect with the global startup sphere and to build closer relationships within the club itself.

Most important, however, the process of organizing the TechTrek is itself an expedited introduction to entrepreneurship. The trip is entirely planned by StartLabs members; we figure out travel logistics, find sponsors, and then discover ways to optimize our funding.

In organizing this year’s trip to Iceland, we had to learn how to delegate roles to all the planners and how to maintain morale when making this trip a reality seemed to be an impossible task. We woke up extra early to take 6 a.m. calls with Icelandic founders and sponsors. We came up with options for different levels of sponsorship, used pattern recognition to deduce the email addresses of hundreds of potential contacts at organizations we wanted to visit, and got scrappy in using our LinkedIn connections.

And as any good entrepreneur must, we had to learn how to be lean and maximize our resources. To stretch our food budget, we planned all our incubator and company visits around lunchtime in hopes of getting fed, played human Tetris as we fit 16 people into a six-person Airbnb, and emailed grocery stores to get their nearly expired foods for a discount. We even made a deal with the local bus company to give us free tickets in exchange for a story post on our Instagram account.

Tech

The Download: spying keyboard software, and why boring AI is best

Published

2 years ago on 22 August 2023

By

Terry Power

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

How ubiquitous keyboard software puts hundreds of millions of Chinese users at risk

For millions of Chinese people, the first software they download onto devices is always the same: a keyboard app. Yet few of them are aware that it may make everything they type vulnerable to spying eyes.

QWERTY keyboards are inefficient as many Chinese characters share the same latinized spelling. As a result, many switch to smart, localized keyboard apps to save time and frustration. Today, over 800 million Chinese people use third-party keyboard apps on their PCs, laptops, and mobile phones.

But a recent report by the Citizen Lab, a University of Toronto–affiliated research group, revealed that Sogou, one of the most popular Chinese keyboard apps, had a massive security loophole. Read the full story.

—Zeyi Yang

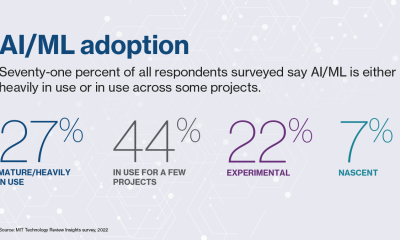

Why we should all be rooting for boring AI

Earlier this month, the US Department of Defense announced it is setting up a Generative AI Task Force, aimed at “analyzing and integrating” AI tools such as large language models across the department. It hopes they could improve intelligence and operational planning.

But those might not be the right use cases, writes our senior AI reporter Melissa Heikkilä. Generative AI tools, such as language models, are glitchy and unpredictable, and they make things up. They also have massive security vulnerabilities, privacy problems, and deeply ingrained biases.

Applying these technologies in high-stakes settings could lead to deadly accidents where it’s unclear who or what should be held responsible, or even why the problem occurred. The DoD’s best bet is to apply generative AI to more mundane things like Excel, email, or word processing. Read the full story.

This story is from The Algorithm, Melissa’s weekly newsletter giving you the inside track on all things AI. Sign up to receive it in your inbox every Monday.

The ice cores that will let us look 1.5 million years into the past

To better understand the role atmospheric carbon dioxide plays in Earth’s climate cycles, scientists have long turned to ice cores drilled in Antarctica, where snow layers accumulate and compact over hundreds of thousands of years, trapping samples of ancient air in a lattice of bubbles that serve as tiny time capsules.

By analyzing those cores, scientists can connect greenhouse-gas concentrations with temperatures going back 800,000 years. Now, a new European-led initiative hopes to eventually retrieve the oldest core yet, dating back 1.5 million years. But that impressive feat is still only the first step. Once they’ve done that, they’ll have to figure out how they’re going to extract the air from the ice. Read the full story.

—Christian Elliott

This story is from the latest edition of our print magazine, set to go live tomorrow. Subscribe today for as low as $8/month to ensure you receive full access to the new Ethics issue and in-depth stories on experimental drugs, AI-assisted warfare, microfinance, and more.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 How AI got dragged into the culture wars

Fears about ‘woke’ AI fundamentally misunderstand how it works. Yet they’re gaining traction. (The Guardian)

+ Why it’s impossible to build an unbiased AI language model. (MIT Technology Review)

2 Researchers are racing to understand a new coronavirus variant

It’s unlikely to be cause for concern, but it shows this virus still has plenty of tricks up its sleeve. (Nature)

+ Covid hasn’t entirely gone away—here’s where we stand. (MIT Technology Review)

+ Why we can’t afford to stop monitoring it. (Ars Technica)

3 How Hilary became such a monster storm

Much of it is down to unusually hot sea surface temperatures. (Wired $)

+ The era of simultaneous climate disasters is here to stay. (Axios)

+ People are donning cooling vests so they can work through the heat. (Wired $)

4 Brain privacy is set to become important

Scientists are getting better at decoding our brain data. It’s surely only a matter of time before others want a peek. (The Atlantic $)

+ How your brain data could be used against you. (MIT Technology Review)

5 How Nvidia built such a big competitive advantage in AI chips

Today it accounts for 70% of all AI chip sales—and an even greater share for training generative models. (NYT $)

+ The chips it’s selling to China are less effective due to US export controls. (Ars Technica)

+ These simple design rules could turn the chip industry on its head. (MIT Technology Review)

6 Inside the complex world of dissociative identity disorder on TikTok

Reducing stigma is great, but doctors fear people are self-diagnosing or even imitating the disorder. (The Verge)

7 What TikTok might have to give up to keep operating in the US

This shows just how hollow the authorities’ purported data-collection concerns really are. (Forbes)

8 Soldiers in Ukraine are playing World of Tanks on their phones

It’s eerily similar to the war they are themselves fighting, but they say it helps them to dissociate from the horror. (NYT $)

9 Conspiracy theorists are sharing mad ideas on what causes wildfires

But it’s all just a convoluted way to try to avoid having to tackle climate change. (Slate $)

10 Christie’s accidentally leaked the location of tons of valuable art

Seemingly thanks to the metadata that often automatically attaches to smartphone photos. (WP $)

Quote of the day

“Is it going to take people dying for something to move forward?”

—An anonymous air traffic controller warns that staffing shortages in their industry, plus other factors, are starting to threaten passenger safety, the New York Times reports.

The big story

Inside effective altruism, where the far future counts a lot more than the present

October 2022

Since its birth in the late 2000s, effective altruism has aimed to answer the question “How can those with means have the most impact on the world in a quantifiable way?”—and supplied methods for calculating the answer.

It’s no surprise that effective altruism’s ideas have long faced criticism for reflecting white Western saviorism, alongside an avoidance of structural problems in favor of abstract math. And as believers pour even greater amounts of money into the movement’s increasingly sci-fi ideals, such charges are only intensifying. Read the full story.

—Rebecca Ackermann

We can still have nice things

A place for comfort, fun and distraction in these weird times. (Got any ideas? Drop me a line or tweet ’em at me.)

+ Watch Andrew Scott’s electrifying reading of the 1965 commencement address ‘Choose One of Five’ by Edith Sampson.

+ Here’s how Metallica makes sure its live performances ROCK. ($)

+ Cannot deal with this utterly ludicrous wooden vehicle.

+ Learn about a weird and wonderful new instrument called a harpejji.

Tech

Why we should all be rooting for boring AI

Published

2 years ago on 22 August 2023

By

Terry Power

This story originally appeared in The Algorithm, our weekly newsletter on AI. To get stories like this in your inbox first, sign up here.

I’m back from a wholesome week off picking blueberries in a forest. So this story we published last week about the messy ethics of AI in warfare is just the antidote, bringing my blood pressure right back up again.

Arthur Holland Michel does a great job looking at the complicated and nuanced ethical questions around warfare and the military’s increasing use of artificial-intelligence tools. There are myriad ways AI could fail catastrophically or be abused in conflict situations, and there don’t seem to be any real rules constraining it yet. Holland Michel’s story illustrates how little there is to hold people accountable when things go wrong.

Last year I wrote about how the war in Ukraine kick-started a new boom in business for defense AI startups. The latest hype cycle has only added to that, as companies—and now the military too—race to embed generative AI in products and services.

Earlier this month, the US Department of Defense announced it is setting up a Generative AI Task Force, aimed at “analyzing and integrating” AI tools such as large language models across the department.

The department sees tons of potential to “improve intelligence, operational planning, and administrative and business processes.”

But Holland Michel’s story highlights why the first two use cases might be a bad idea. Generative AI tools, such as language models, are glitchy and unpredictable, and they make things up. They also have massive security vulnerabilities, privacy problems, and deeply ingrained biases.

Applying these technologies in high-stakes settings could lead to deadly accidents where it’s unclear who or what should be held responsible, or even why the problem occurred. Everyone agrees that humans should make the final call, but that is made harder by technology that acts unpredictably, especially in fast-moving conflict situations.

Some worry that the people lowest on the hierarchy will pay the highest price when things go wrong: “In the event of an accident—regardless of whether the human was wrong, the computer was wrong, or they were wrong together—the person who made the ‘decision’ will absorb the blame and protect everyone else along the chain of command from the full impact of accountability,” Holland Michel writes.

The only ones who seem likely to face no consequences when AI fails in war are the companies supplying the technology.

It helps companies when the rules the US has set to govern AI in warfare are mere recommendations, not laws. That makes it really hard to hold anyone accountable. Even the AI Act, the EU’s sweeping upcoming regulation for high-risk AI systems, exempts military uses, which arguably are the highest-risk applications of them all.

While everyone is looking for exciting new uses for generative AI, I personally can’t wait for it to become boring.

Amid early signs that people are starting to lose interest in the technology, companies might find that these sorts of tools are better suited for mundane, low-risk applications than solving humanity’s biggest problems.

Applying AI in, for example, productivity software such as Excel, email, or word processing might not be the sexiest idea, but compared to warfare it’s a relatively low-stakes application, and simple enough to have the potential to actually work as advertised. It could help us do the tedious bits of our jobs faster and better.

Boring AI is unlikely to break as easily and, most important, won’t kill anyone. Hopefully, soon we’ll forget we’re interacting with AI at all. (It wasn’t that long ago when machine translation was an exciting new thing in AI. Now most people don’t even think about its role in powering Google Translate.)

That’s why I’m more confident that organizations like the DoD will find success applying generative AI in administrative and business processes.

Boring AI is not morally complex. It’s not magic. But it works.

Deeper Learning

AI isn’t great at decoding human emotions. So why are regulators targeting the tech?

Amid all the chatter about ChatGPT, artificial general intelligence, and the prospect of robots taking people’s jobs, regulators in the EU and the US have been ramping up warnings against AI and emotion recognition. Emotion recognition is the attempt to identify a person’s feelings or state of mind using AI analysis of video, facial images, or audio recordings.

But why is this a top concern? Western regulators are particularly concerned about China’s use of the technology, and its potential to enable social control. And there’s also evidence that it simply does not work properly. Tate Ryan-Mosley dissected the thorny questions around the technology in last week’s edition of The Technocrat, our weekly newsletter on tech policy.

Bits and Bytes

Meta is preparing to launch free code-generating software

A version of its new LLaMA 2 language model that is able to generate programming code will pose a stiff challenge to similar proprietary code-generating programs from rivals such as OpenAI, Microsoft, and Google. The open-source program is called Code Llama, and its launch is imminent, according to The Information. (The Information)

OpenAI is testing GPT-4 for content moderation

Using the language model to moderate online content could really help alleviate the mental toll content moderation takes on humans. OpenAI says it’s seen some promising first results, although the tech does not outperform highly trained humans. A lot of big, open questions remain, such as whether the tool can be attuned to different cultures and pick up context and nuance. (OpenAI)

Google is working on an AI assistant that offers life advice

The generative AI tools could function as a life coach, offering up ideas, planning instructions, and tutoring tips. (The New York Times)

Two tech luminaries have quit their jobs to build AI systems inspired by bees

Sakana, a new AI research lab, draws inspiration from the animal kingdom. Founded by two prominent industry researchers and former Googlers, the company plans to make multiple smaller AI models that work together, the idea being that a “swarm” of programs could be as powerful as a single large AI model. (Bloomberg)