What went so wrong with covid in India? Everything.

Published 4 years ago

By Terry Power

But voices like his were drowned out by the federal government’s messaging, which suggested that India had somehow outwitted the virus. The hype was so strong that even some medical professionals bought into it. A Harvard Medical School professor told the financial daily Mint that “the pandemic has behaved in a very unique way in India.”

“The real harm in undercounting is that people will take the pandemic lightly,” says Arun. “If supposedly few people are dying due to covid, the public will think it doesn’t kill, and they won’t change their behavior.” In fact, by mid-December India had reached yet another somber milestone: it recorded its 10 millionth infection. It was only the second country to do so, after the US.

The government hadn’t used the first lockdown wisely, but December was its chance to set things right, says Gagandeep Kang, a professor of microbiology at the Christian Medical College in Vellore, Tamil Nadu. She says that a number of tactics—ramping up sequencing, studying public behavior, collecting more data, refusing permission for superspreader events, and starting the vaccine rollout earlier than planned—would have saved many lives during the now-inevitable second wave.

Instead, she says, the government continued its “top-down approach,” in which bureaucrats rather than scientists and health-care professionals were making decisions.

“We live in a very unequal society,” she says. “So we need to engage people and build partnerships at a granular level if we are to effectively deliver information and resources.”

In December the government of Goa let its guard down entirely. The state is heavily reliant on tourism, which makes up nearly 17% of its income. The bulk of the tourists show up in December to celebrate Christmas and New Year on sandy beaches with raves and fireworks.

Vivek Menezes, a Goan journalist, says that the state’s reputation as “the place to be” had not faded during the pandemic. “It’s the place for India’s wealthy and for Bollywood, and therefore it’s the place for India,” Menezes says. The pandemic had kept foreign tourists from visiting, but domestic holidaymakers poured in. Some states, such as Maharashtra, had placed restrictions at their borders; others, like Kerala, had a strict policy of contact tracing. In Goa, visitors didn’t even have to show a negative covid test. And the state’s masking policy extended only to health-care workers, visitors to health-care facilities, and people showing symptoms. “Goa was left to the dogs,” says Menezes.

The world’s largest superspreader

India started 2021 having registered nearly 150,000 deaths. Only then, in January, did the government place its first vaccine order, and it was for a shockingly low amount—just 11 million doses of Covishield, the Indian version of the AstraZeneca vaccine. It also ordered 5.5 million doses of Covaxin, a locally developed vaccine that has yet to publish efficacy data. Those orders fell far short of what the country actually needed. Subhash Salunke, a senior advisor to the independent Public Health Foundation of India, estimates that 1.4 billion doses would have been required to fully vaccinate all eligible adults.

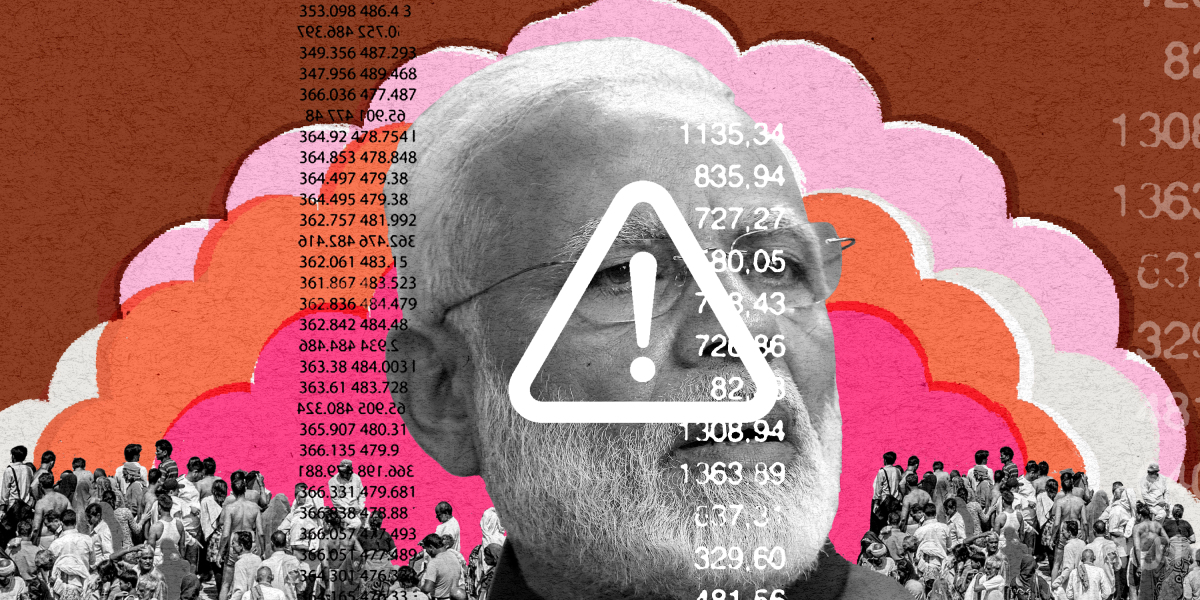

On January 28, in an address to the World Economic Forum in Davos, Modi declared that India had “saved humanity from a big disaster by containing corona effectively.” His government then gave the go-ahead for the Kumbh Mela, a Hindu festival that attracts crushing throngs of millions of people to the holy city of Haridwar in the northern state of Uttarakhand, which is famous for its temples and pilgrimage sites. When the state’s former chief minister suggested that the festival should be “symbolic” this year given the circumstances, he was fired.

A senior politician in the prime minister’s Bharatiya Janata Party told the Indian magazine The Caravan that the federal government had its eye on the forthcoming state elections and didn’t want to lose the support of religious leaders. As it turned out, the Kumbh wasn’t just any superspreader event—with a reported 9.1 million people in attendance, it was the world’s largest superspreader event. “Any person with a basic textbook on public health would have told you this was not the time,” says Kang.

In February Salunke, the public health expert, was working in an agrarian district in the western state of Maharashtra when he noticed that the virus was transmitting “much faster” than before. It was affecting entire families.

“I felt we were dealing with an agent that had changed or appeared to have changed,” he says. “I started to investigate.” Salunke, it now turns out, had found one mutation of a variant that had been detected in India the previous October. He suspected that the variant, now known as delta, was about to run rampant. It did. It is now in more than 90 countries.

“I went to all those who are responsible and those who matter—whether district level officials or bureaucrats at the central level, you name it. Everyone who I knew I immediately shared this information with,” he says.

Salunke’s discovery doesn’t appear to have affected the official response. Even as the second wave was accelerating and after the WHO designated the new mutation “a variant of interest” on April 4, Modi kept up his hectic schedule ahead of state elections in West Bengal, personally appearing at numerous public rallies.

At one point he gloated about the size of the crowd he had attracted: “In all directions I see huge crowds of people … I have never seen such crowds at a rally.”

“The rallies were a direct message from the leadership that the virus was gone,” says Laxminarayan of the Center for Disease Dynamics, Economics & Policy.

The second wave filled hospitals, which quickly ran out of beds, oxygen, and medication, forcing gasping patients to wait—and then die—in homes, in parking lots, and on sidewalks. Crematoriums had to build makeshift pyres to keep up with the demand, and there were reports that the outpouring of ash drifted so far it stained clothes a kilometer away. Many poor people couldn’t even afford to pay for funeral rites and immersed the bodies of their loved ones directly into the River Ganges, which led hundreds of corpses to wash up on the banks in several states. Alongside these apocalyptic scenes came the news that deadly fungal infections were overwhelming covid patients, likely as a result of lower infection control and overreliance on steroids in treating the virus.

Chaos continues; Delta spreads

And all along, there has been Modi. The prime minister had been the face of India’s fight against the pandemic—literally: his headshot appears prominently on the certificate given to people who get their vaccine. But after the second wave, his premature triumphalism was mocked and his lack of preparedness derided widely. Since then, he has gone largely missing from the public eye, leaving it to colleagues to place the blame elsewhere, most notably—and inaccurately—on the government’s political opposition. As a result, Indians have been left to face the biggest national crisis of their lifetime on their own.

This abandonment has created a sense of camaraderie among some groups of Indians, with many using social media and WhatsApp to help each other out by sharing information about hospital beds and oxygen cylinders. They have also organized on the ground, distributing meals to those in need.

But the leadership vacuum has also produced a huge market for profiteers and scammers at the highest levels. In May, opposition politicians accused a leader of the ruling BJP, Tejaswi Surya, of taking part in a vaccine commission scam. And the health minister of Goa, Vishwajit Rane, was forced to deny claims that he played a part in a scam involving the purchase of ventilators. Even the prime minister’s signature covid relief fund, PM Cares, came under fire after it spent Rs 2,250 crore (over $300 million) on 60,000 ventilators that doctors later complained were faulty and “too risky to use.” The fund, which attracted at least $423 million in donations, has also raised concerns about corruption and lack of transparency.

A successful vaccination agenda might have helped erase the memory of the string of missteps, but under Modi it has only been one technocratic mistake after another. At the end of May, with far fewer vaccines in hand than it needs, the government announced plans to start mixing doses of different vaccine types. And at the height of the second wave, it introduced Co-WIN, an online booking system that was mandatory for anyone under 45 who was trying to get vaccinated. The system, which had been under scrutiny for months, was disastrous: not only did it automatically exclude those who do not use computers and smartphones, but it was also hit by bugs and overwhelmed by people desperate to get protection.

My senior spring in high school, I decided to defer my MIT enrollment by a year. I had always planned to take a gap year, but after receiving the silver tube in the mail and seeing all my college-bound friends plan out their classes and dorm decor, I got cold feet. Every time I mentioned my plans, I was met with questions like “But what about school?” and “MIT is cool with this?”

Yeah. MIT totally is. Postponing your MIT start date is as simple as clicking a checkbox.

Now, having finished my first year of classes, I’m really grateful that I stuck with my decision to delay MIT, as I realized that having a full year of unstructured time is a gift. I could let my creative juices run. Pick up hobbies for fun. Do cool things like work at an AI startup and teach myself how to create latte art. My favorite part of the year, however, was backpacking across Europe. I traveled through Austria, Slovakia, Russia, Spain, France, the UK, Greece, Italy, Germany, Poland, Romania, and Hungary.

Moreover, despite my fear that I’d be losing a valuable year, traveling turned out to be the most productive thing I could have done with my time. I got to explore different cultures, meet new people from all over the world, and gain unique perspectives that I couldn’t have gotten otherwise. My travels throughout Europe allowed me to leave my comfort zone and expand my understanding of the greater human experience.

“In Iceland there’s less focus on hustle culture, and this relaxed approach to work-life balance ends up fostering creativity. This was a wild revelation to a bunch of MIT students.”

When I became a full-time student last fall, I realized that StartLabs, the premier undergraduate entrepreneurship club on campus, gives MIT undergrads a similar opportunity to expand their horizons and experience new things. I immediately signed up. At StartLabs, we host fireside chats and ideathons throughout the year. But our flagship event is our annual TechTrek over spring break. In previous years, StartLabs has gone on TechTrek trips to Germany, Switzerland, and Israel. On these fully funded trips, StartLabs members have visited and collaborated with industry leaders, incubators, startups, and academic institutions. They take these treks both to connect with the global startup sphere and to build closer relationships within the club itself.

Most important, however, the process of organizing the TechTrek is itself an expedited introduction to entrepreneurship. The trip is entirely planned by StartLabs members; we figure out travel logistics, find sponsors, and then discover ways to optimize our funding.

In organizing this year’s trip to Iceland, we had to learn how to delegate roles to all the planners and how to maintain morale when making this trip a reality seemed to be an impossible task. We woke up extra early to take 6 a.m. calls with Icelandic founders and sponsors. We came up with options for different levels of sponsorship, used pattern recognition to deduce the email addresses of hundreds of potential contacts at organizations we wanted to visit, and all got scrappy with utilizing our LinkedIn connections.

And as any good entrepreneur must, we had to learn how to be lean and maximize our resources. To stretch our food budget, we planned all our incubator and company visits around lunchtime in hopes of getting fed, played human Tetris as we fit 16 people into a six-person Airbnb, and emailed grocery stores to get their nearly expired foods for a discount. We even made a deal with the local bus company to give us free tickets in exchange for a story post on our Instagram account.

The Download: spying keyboard software, and why boring AI is best

Published 2 years ago on 22 August 2023

By Terry Power

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

How ubiquitous keyboard software puts hundreds of millions of Chinese users at risk

For millions of Chinese people, the first software they download onto devices is always the same: a keyboard app. Yet few of them are aware that it may make everything they type vulnerable to spying eyes.

QWERTY keyboards are inefficient as many Chinese characters share the same latinized spelling. As a result, many switch to smart, localized keyboard apps to save time and frustration. Today, over 800 million Chinese people use third-party keyboard apps on their PCs, laptops, and mobile phones.

But a recent report by the Citizen Lab, a University of Toronto–affiliated research group, revealed that Sogou, one of the most popular Chinese keyboard apps, had a massive security loophole. Read the full story.

—Zeyi Yang

Why we should all be rooting for boring AI

Earlier this month, the US Department of Defense announced it is setting up a Generative AI Task Force, aimed at “analyzing and integrating” AI tools such as large language models across the department. It hopes they could improve intelligence and operational planning.

But those might not be the right use cases, writes our senior AI reporter Melissa Heikkilä. Generative AI tools, such as language models, are glitchy and unpredictable, and they make things up. They also have massive security vulnerabilities, privacy problems, and deeply ingrained biases.

Applying these technologies in high-stakes settings could lead to deadly accidents where it’s unclear who or what should be held responsible, or even why the problem occurred. The DoD’s best bet is to apply generative AI to more mundane things like Excel, email, or word processing. Read the full story.

This story is from The Algorithm, Melissa’s weekly newsletter giving you the inside track on all things AI. Sign up to receive it in your inbox every Monday.

The ice cores that will let us look 1.5 million years into the past

To better understand the role atmospheric carbon dioxide plays in Earth’s climate cycles, scientists have long turned to ice cores drilled in Antarctica, where snow layers accumulate and compact over hundreds of thousands of years, trapping samples of ancient air in a lattice of bubbles that serve as tiny time capsules.

By analyzing those cores, scientists can connect greenhouse-gas concentrations with temperatures going back 800,000 years. Now, a new European-led initiative hopes to eventually retrieve the oldest core yet, dating back 1.5 million years. But that impressive feat is still only the first step. Once they’ve done that, they’ll have to figure out how they’re going to extract the air from the ice. Read the full story.

—Christian Elliott

This story is from the latest edition of our print magazine, set to go live tomorrow. Subscribe today for as low as $8/month to ensure you receive full access to the new Ethics issue and in-depth stories on experimental drugs, AI-assisted warfare, microfinance, and more.

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 How AI got dragged into the culture wars

Fears about ‘woke’ AI fundamentally misunderstand how it works. Yet they’re gaining traction. (The Guardian)

+ Why it’s impossible to build an unbiased AI language model. (MIT Technology Review)

2 Researchers are racing to understand a new coronavirus variant

It’s unlikely to be cause for concern, but it shows this virus still has plenty of tricks up its sleeve. (Nature)

+ Covid hasn’t entirely gone away—here’s where we stand. (MIT Technology Review)

+ Why we can’t afford to stop monitoring it. (Ars Technica)

3 How Hilary became such a monster storm

Much of it is down to unusually hot sea surface temperatures. (Wired $)

+ The era of simultaneous climate disasters is here to stay. (Axios)

+ People are donning cooling vests so they can work through the heat. (Wired $)

4 Brain privacy is set to become important

Scientists are getting better at decoding our brain data. It’s surely only a matter of time before others want a peek. (The Atlantic $)

+ How your brain data could be used against you. (MIT Technology Review)

5 How Nvidia built such a big competitive advantage in AI chips

Today it accounts for 70% of all AI chip sales—and an even greater share for training generative models. (NYT $)

+ The chips it’s selling to China are less effective due to US export controls. (Ars Technica)

+ These simple design rules could turn the chip industry on its head. (MIT Technology Review)

6 Inside the complex world of dissociative identity disorder on TikTok

Reducing stigma is great, but doctors fear people are self-diagnosing or even imitating the disorder. (The Verge)

7 What TikTok might have to give up to keep operating in the US

This shows just how hollow the authorities’ purported data-collection concerns really are. (Forbes)

8 Soldiers in Ukraine are playing World of Tanks on their phones

It’s eerily similar to the war they are themselves fighting, but they say it helps them to dissociate from the horror. (NYT $)

9 Conspiracy theorists are sharing mad ideas on what causes wildfires

But it’s all just a convoluted way to try to avoid having to tackle climate change. (Slate $)

10 Christie’s accidentally leaked the location of tons of valuable art

Seemingly thanks to the metadata that often automatically attaches to smartphone photos. (WP $)

Quote of the day

“Is it going to take people dying for something to move forward?”

—An anonymous air traffic controller warns that staffing shortages in their industry, plus other factors, are starting to threaten passenger safety, the New York Times reports.

The big story

Inside effective altruism, where the far future counts a lot more than the present

October 2022

Since its birth in the late 2000s, effective altruism has aimed to answer the question “How can those with means have the most impact on the world in a quantifiable way?”—and supplied methods for calculating the answer.

It’s no surprise that effective altruism’s ideas have long faced criticism for reflecting white Western saviorism, alongside an avoidance of structural problems in favor of abstract math. And as believers pour even greater amounts of money into the movement’s increasingly sci-fi ideals, such charges are only intensifying. Read the full story.

—Rebecca Ackermann

We can still have nice things

A place for comfort, fun and distraction in these weird times. (Got any ideas? Drop me a line or tweet ’em at me.)

+ Watch Andrew Scott’s electrifying reading of the 1965 commencement address ‘Choose One of Five’ by Edith Sampson.

+ Here’s how Metallica makes sure its live performances ROCK. ($)

+ Cannot deal with this utterly ludicrous wooden vehicle.

+ Learn about a weird and wonderful new instrument called a harpejji.

Why we should all be rooting for boring AI

Published 2 years ago on 22 August 2023

By Terry Power

This story originally appeared in The Algorithm, our weekly newsletter on AI. To get stories like this in your inbox first, sign up here.

I’m back from a wholesome week off picking blueberries in a forest. So this story we published last week about the messy ethics of AI in warfare is just the antidote, bringing my blood pressure right back up again.

Arthur Holland Michel does a great job looking at the complicated and nuanced ethical questions around warfare and the military’s increasing use of artificial-intelligence tools. There are myriad ways AI could fail catastrophically or be abused in conflict situations, and there don’t seem to be any real rules constraining it yet. Holland Michel’s story illustrates how little there is to hold people accountable when things go wrong.

Last year I wrote about how the war in Ukraine kick-started a new boom in business for defense AI startups. The latest hype cycle has only added to that, as companies—and now the military too—race to embed generative AI in products and services.

Earlier this month, the US Department of Defense announced it is setting up a Generative AI Task Force, aimed at “analyzing and integrating” AI tools such as large language models across the department.

The department sees tons of potential to “improve intelligence, operational planning, and administrative and business processes.”

But Holland Michel’s story highlights why the first two use cases might be a bad idea. Generative AI tools, such as language models, are glitchy and unpredictable, and they make things up. They also have massive security vulnerabilities, privacy problems, and deeply ingrained biases.

Applying these technologies in high-stakes settings could lead to deadly accidents where it’s unclear who or what should be held responsible, or even why the problem occurred. Everyone agrees that humans should make the final call, but that is made harder by technology that acts unpredictably, especially in fast-moving conflict situations.

Some worry that the people lowest on the hierarchy will pay the highest price when things go wrong: “In the event of an accident—regardless of whether the human was wrong, the computer was wrong, or they were wrong together—the person who made the ‘decision’ will absorb the blame and protect everyone else along the chain of command from the full impact of accountability,” Holland Michel writes.

The only ones who seem likely to face no consequences when AI fails in war are the companies supplying the technology.

It helps companies when the rules the US has set to govern AI in warfare are mere recommendations, not laws. That makes it really hard to hold anyone accountable. Even the AI Act, the EU’s sweeping upcoming regulation for high-risk AI systems, exempts military uses, which arguably are the highest-risk applications of them all.

While everyone is looking for exciting new uses for generative AI, I personally can’t wait for it to become boring.

Amid early signs that people are starting to lose interest in the technology, companies might find that these sorts of tools are better suited for mundane, low-risk applications than solving humanity’s biggest problems.

Applying AI in, for example, productivity software such as Excel, email, or word processing might not be the sexiest idea, but compared to warfare it’s a relatively low-stakes application, and simple enough to have the potential to actually work as advertised. It could help us do the tedious bits of our jobs faster and better.

Boring AI is unlikely to break as easily and, most important, won’t kill anyone. Hopefully, soon we’ll forget we’re interacting with AI at all. (It wasn’t that long ago when machine translation was an exciting new thing in AI. Now most people don’t even think about its role in powering Google Translate.)

That’s why I’m more confident that organizations like the DoD will find success applying generative AI in administrative and business processes.

Boring AI is not morally complex. It’s not magic. But it works.

Deeper Learning

AI isn’t great at decoding human emotions. So why are regulators targeting the tech?

Amid all the chatter about ChatGPT, artificial general intelligence, and the prospect of robots taking people’s jobs, regulators in the EU and the US have been ramping up warnings against AI and emotion recognition. Emotion recognition is the attempt to identify a person’s feelings or state of mind using AI analysis of video, facial images, or audio recordings.

But why is this a top concern? Western regulators are particularly concerned about China’s use of the technology, and its potential to enable social control. And there’s also evidence that it simply does not work properly. Tate Ryan-Mosley dissected the thorny questions around the technology in last week’s edition of The Technocrat, our weekly newsletter on tech policy.

Bits and Bytes

Meta is preparing to launch free code-generating software

A version of its new LLaMA 2 language model that is able to generate programming code will pose a stiff challenge to similar proprietary code-generating programs from rivals such as OpenAI, Microsoft, and Google. The open-source program is called Code Llama, and its launch is imminent, according to The Information. (The Information)

OpenAI is testing GPT-4 for content moderation

Using the language model to moderate online content could really help alleviate the mental toll content moderation takes on humans. OpenAI says it’s seen some promising first results, although the tech does not outperform highly trained humans. A lot of big, open questions remain, such as whether the tool can be attuned to different cultures and pick up context and nuance. (OpenAI)

Google is working on an AI assistant that offers life advice

The generative AI tools could function as a life coach, offering up ideas, planning instructions, and tutoring tips. (The New York Times)

Two tech luminaries have quit their jobs to build AI systems inspired by bees

Sakana, a new AI research lab, draws inspiration from the animal kingdom. Founded by two prominent industry researchers and former Googlers, the company plans to make multiple smaller AI models that work together, the idea being that a “swarm” of programs could be as powerful as a single large AI model. (Bloomberg)